
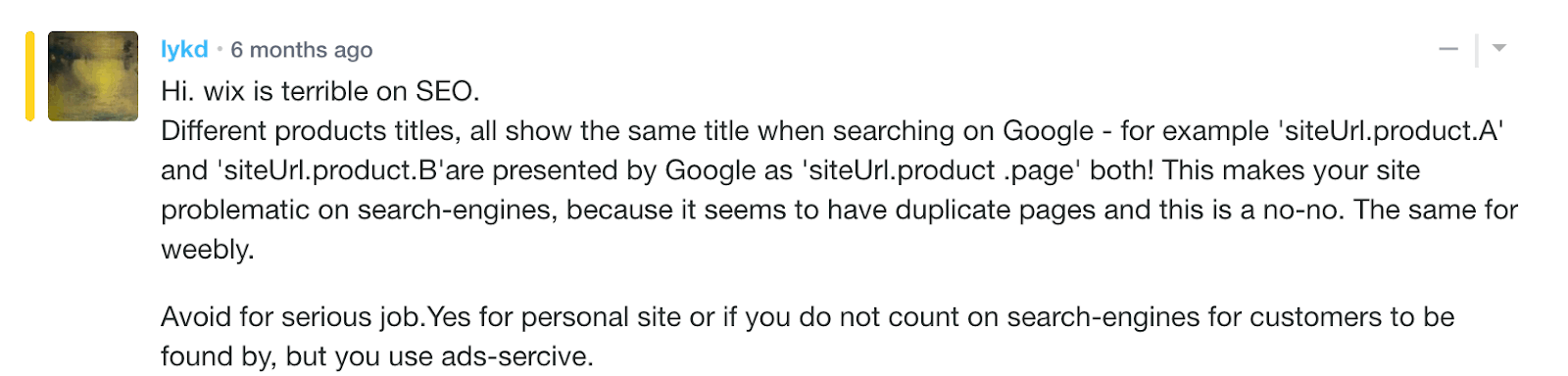

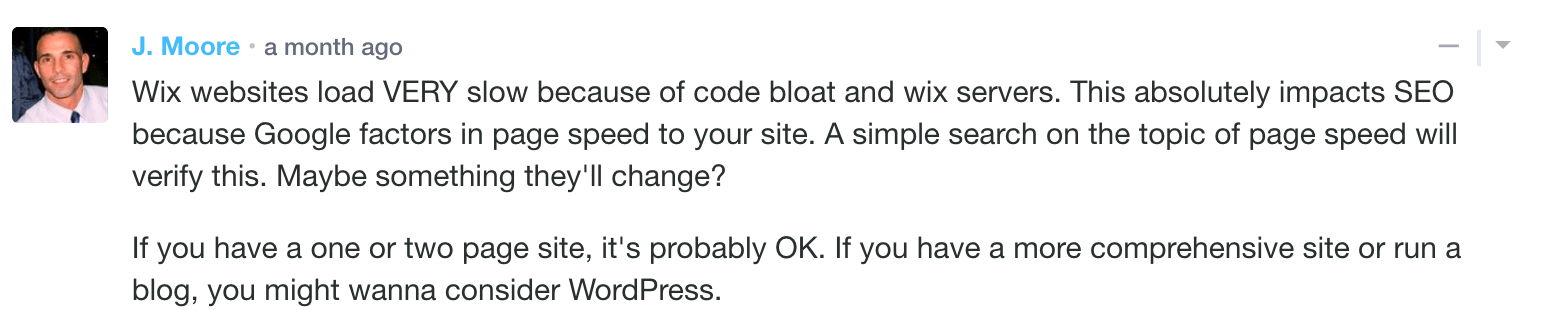

Since I started at Tooltester.com, I’ve probably gotten 4 or 5 ‘hate’ emails or comments a month concerning SEO and website builders.

After more than 4 years, that adds up to over 200 people complaining about website builders’ SEO.

Mainly I get comments from:

- Unhappy users

- Developers and agencies

- SEO Professionals

But I’ve also seen people very happy with their website builders and their SEO rankings. And I get providers assuring me that their platforms are 100% SEO friendly.

But of course, in the SEO game, not everyone can be at the top and that generates frustration. For each search query, there can be just one top result among those 10 (or so) blue links. That’s why it’s only logical to start pointing fingers.

I couldn’t find any study that tackled this question: Are website builders really bad for SEO?

So I decided to run an experiment to research this.

What were my hypotheses?

I knew that some people achieved decent rankings with site builders. But I had also read a lot of SEO experts being harsh about these platforms’ SEO capabilities.

And truth be told, each one has its own SEO flaws which (in my opinion) don’t make them a great choice for highly competitive niches (e.g. website builder reviews), where you have to squeeze out every possible bit of SEO superpower.

Some of the comments and emails I get even suggest that sites won’t be indexed when using a website builder like Wix, Jimdo or Weebly.

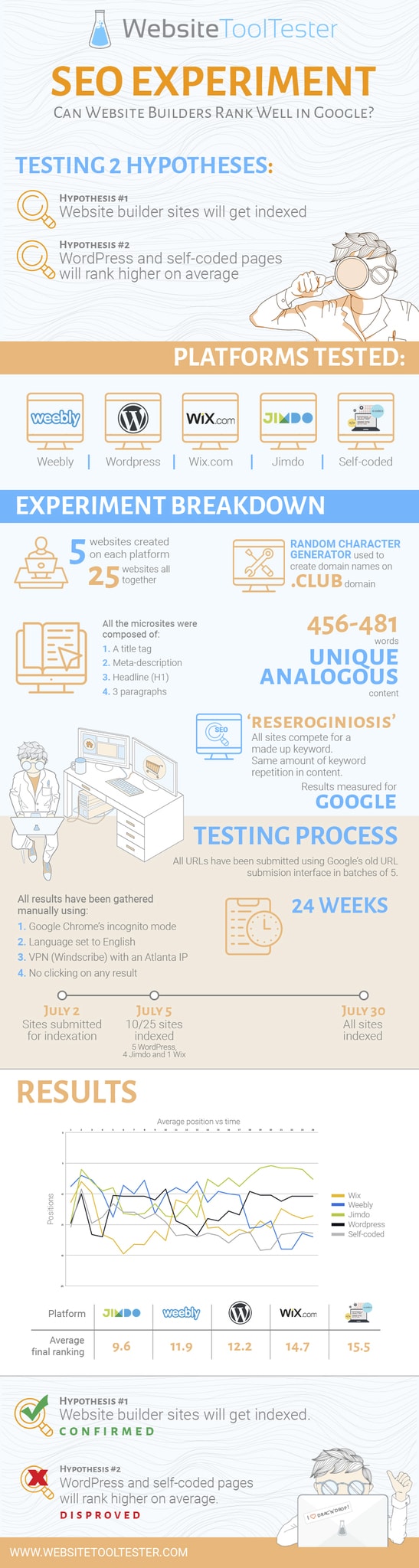

With these in mind, I had 2 hypotheses that I wanted to test:

- Website builder sites will get indexed

- WordPress and self-coded pages will rank higher on average

From my previous experience with website builders and even from some comments made by Google itself, I was convinced that Wix, Jimdo and Weebly sites would get indexed – provided that good and unique content was used.

In our office, we were evenly divided. One half thought that self-coded and WordPress sites would rank comparatively better than the website builders’ – with the main concerns about site builders being speed and source code cleanliness. The other half thought that there wouldn’t be any noticeable difference.

Experiment design

Sure, I’ve checked many SEO experiments featured in SEO publications. But to be 100% honest, I am not an SEO researcher, so this was a challenge for me.

I had to think long and hard about how to structure this experiment.

I thought that the best approach would be to create several 1-page sites, each with its own domain and with analogous content and design, to compete for a made-up keyword (‘reseroginiosis’) – no one else besides my boss knew about this keyword.

Platforms used

I decided to test the following setups/platforms:

- WordPress **

- Self-coded **

- Wix *

- Weebly *

- Jimdo *

* Note: I used premium plans that removed the site builder ad and allowed me to connect a domain name – the same conditions as for the WordPress and self-coded pages.

** Note: The WordPress and self-coded sites were hosted on a shared hosting plan from SiteGround, comparable to the website builders, which also use shared hosting.

Since WordPress also gets its share of bad press in terms of SEO (e.g. slowness), I wanted to compare its results with those of self-coded sites – which are in theory faster.

I created 5 websites for each solution, so I had in total 25 (micro) sites that I’d try to get indexed and ranked for the aforementioned keyword.

Example site built with Weebly

Domain names

To choose the domain names, I used a random character generator. I divided the sites into groups of 5, one for each approach (e.g. WordPress, Weebly, Jimdo, etc.), and all the domain names within a group have the same number of characters.

I used .club domain names for all sites, which were purchased from Ionos (formerly 1and1). I also checked that they were new domain names and not expired ones with any SEO history attached.
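As a rough illustration of the naming step, here is a minimal Python sketch of a random character generator. It is not the tool used in the experiment: the function names are made up for this example, and the group lengths are an assumption read off the list of webpages at the end of this article.

```python
import secrets
import string

# Assumption: label lengths per group, read off the list of webpages
# at the end of the article (one length per group of 5 sites).
GROUP_LENGTHS = [13, 16, 19, 11, 20]


def random_label(length: int) -> str:
    """Return a random lowercase label with exactly `length` characters."""
    return "".join(secrets.choice(string.ascii_lowercase) for _ in range(length))


def generate_domains(group_lengths, sites_per_group=5, tld="club"):
    """Build one list of domain names per group; names in a group share a length."""
    return [
        [f"{random_label(length)}.{tld}" for _ in range(sites_per_group)]
        for length in group_lengths
    ]


if __name__ == "__main__":
    for group in generate_domains(GROUP_LENGTHS):
        print(group)
```

Generated names would still have to be checked for availability and for any previous registration history before being bought.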

Search Engine

I only measured results for Google as it’s the (western) search engine leader, and the one we put the most effort into.

It’d probably be interesting to repeat this experiment using other search engines (e.g. Bing or DuckDuckGo) to see if the results match. If you do it, I’d love to read your results.

I gathered the results for 24 weeks, on Mondays. Instead of using a rank tracker tool, I collected the results manually with this setup:

- Google Chrome’s incognito mode

- Language set to English – results changed significantly when set to other languages

- A VPN (Windscribe) with an Atlanta IP (always the same)

- No clicks on any result

Sidenote: During the experiment, I thought it’d be interesting to also have data for other locations. So from the 10th week onwards, I gathered results for New York and San Francisco too. However, the results were very close to what I was getting in Atlanta, so they weren’t worth reporting.

The content

As I mentioned, I wanted the 25 pages to compete for the keyword ‘reseroginiosis’, a made-up technology that would allow people to control devices with their minds – pretty sci-fi but cool.

All the microsites were composed of:

- A title tag

- Meta-description

- Headline (H1)

- 3 paragraphs

All pages were between 456 and 481 words long. They were also structured in a similar way (e.g. the same number of keyword repetitions). And the content was unique for each of them.

Before I submitted the pages for indexing, they were all tested and checked to ensure compliance with mobile best practices.

Index submission

I used the (no longer available) Google URL submission interface so Google would crawl and index our experiment sites.

I submitted the URLs in batches of 5 (one for each site type) to avoid giving an advantage to one platform (e.g. by submitting all Weebly sites first). I also used a different VPN with U.S. IPs for each submission – not sure this matters too much.
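The batching idea can be sketched in a few lines of Python. This is only an illustration of the ordering, not the process actually used (submissions were made by hand); the function name and the site names are placeholders, not the real experiment domains.

```python
def submission_batches(sites_by_platform):
    """Return batches that each contain exactly one site per platform.

    `sites_by_platform` maps a platform name to its list of sites.
    zip(*...) picks the i-th site of every platform for batch i, so no
    platform has all of its sites submitted before the others.
    """
    return [list(batch) for batch in zip(*sites_by_platform.values())]


if __name__ == "__main__":
    # Placeholder site names, for illustration only.
    platforms = ["wordpress", "self-coded", "wix", "weebly", "jimdo"]
    sites = {p: [f"{p}-site-{i}" for i in range(1, 6)] for p in platforms}
    for number, batch in enumerate(submission_batches(sites), start=1):
        print(number, batch)
```

Note that `zip` stops at the shortest list, so this sketch assumes every platform has the same number of sites, as was the case here with 5 each.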

To speed up the indexation process, I also created a post on Tooltester.com with all the URLs linked in the same order as the indexation submission.

Results

Let me show you what results I got after tracking Google for this keyword for more than 160 days.

Indexation

All the sites were submitted for indexation on July 2. On July 5, ten of the twenty-five pages were indexed (5 WordPress, 4 Jimdo and 1 Wix). By July 30, all pages were finally indexed.

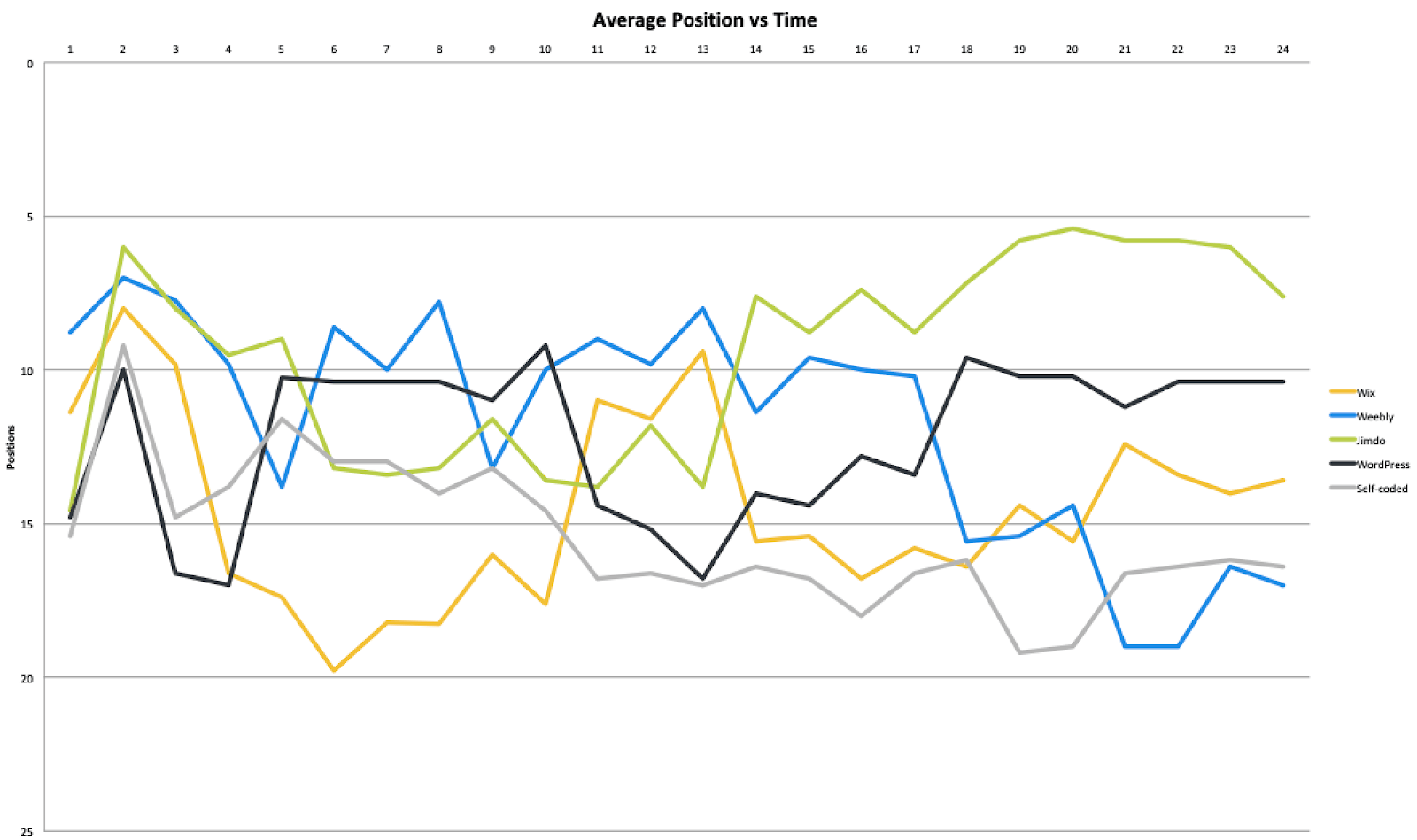

Average position over time

To make the data easier to read, I’ve grouped the results per platform in the following graph. So instead of 25 plot lines there are only 5.

Figure 1

The first thing that comes to mind is how volatile the rankings were – it’s difficult to follow even 5 lines. Of course, it wasn’t easy for Google as there were no user signals that it could interpret (e.g. user CTR). After 14 weeks the rankings seemed a bit more stable for most providers.

If we aggregate every week’s average position by solution, we get the following overall rankings.

| Platform | Average final ranking |
|---|---|
| Wix | 14.7 |
| Weebly | 11.9 |
| Jimdo | 9.6 |
| WordPress | 12.2 |
| Self-coded | 15.5 |

Be aware that this is just a ‘static’ representation of the average ranking that each provider had. If we were to recalculate this now, the results could be significantly different. Hence, don’t take these as definitive winners.
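For readers who want to run this kind of aggregation on their own tracking data, here is a minimal Python sketch. It assumes one reading of the calculation (average the sites of a platform within each week, then average those weekly values over the whole period), which may differ in detail from how the table was produced. The function name and the sample positions are invented for illustration and are not the experiment’s data.

```python
from collections import defaultdict
from statistics import mean


def average_position_by_platform(observations):
    """Aggregate (week, platform, position) tuples into one average per platform.

    Step 1: average the positions of a platform's sites within each week.
    Step 2: average those weekly values over all weeks.
    """
    weekly = defaultdict(list)
    for week, platform, position in observations:
        weekly[(week, platform)].append(position)

    per_platform = defaultdict(list)
    for (_week, platform), positions in weekly.items():
        per_platform[platform].append(mean(positions))

    return {
        platform: round(float(mean(values)), 1)
        for platform, values in per_platform.items()
    }


if __name__ == "__main__":
    # Invented sample data: two sites per platform, two weeks.
    sample = [
        (1, "Jimdo", 3), (1, "Jimdo", 9), (1, "Wix", 12), (1, "Wix", 20),
        (2, "Jimdo", 5), (2, "Jimdo", 7), (2, "Wix", 10), (2, "Wix", 14),
    ]
    print(average_position_by_platform(sample))
```

Because every platform had the same number of sites each week, averaging weekly means gives the same result as averaging all positions directly; the two only diverge when some sites are missing in some weeks (for example before they were indexed).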

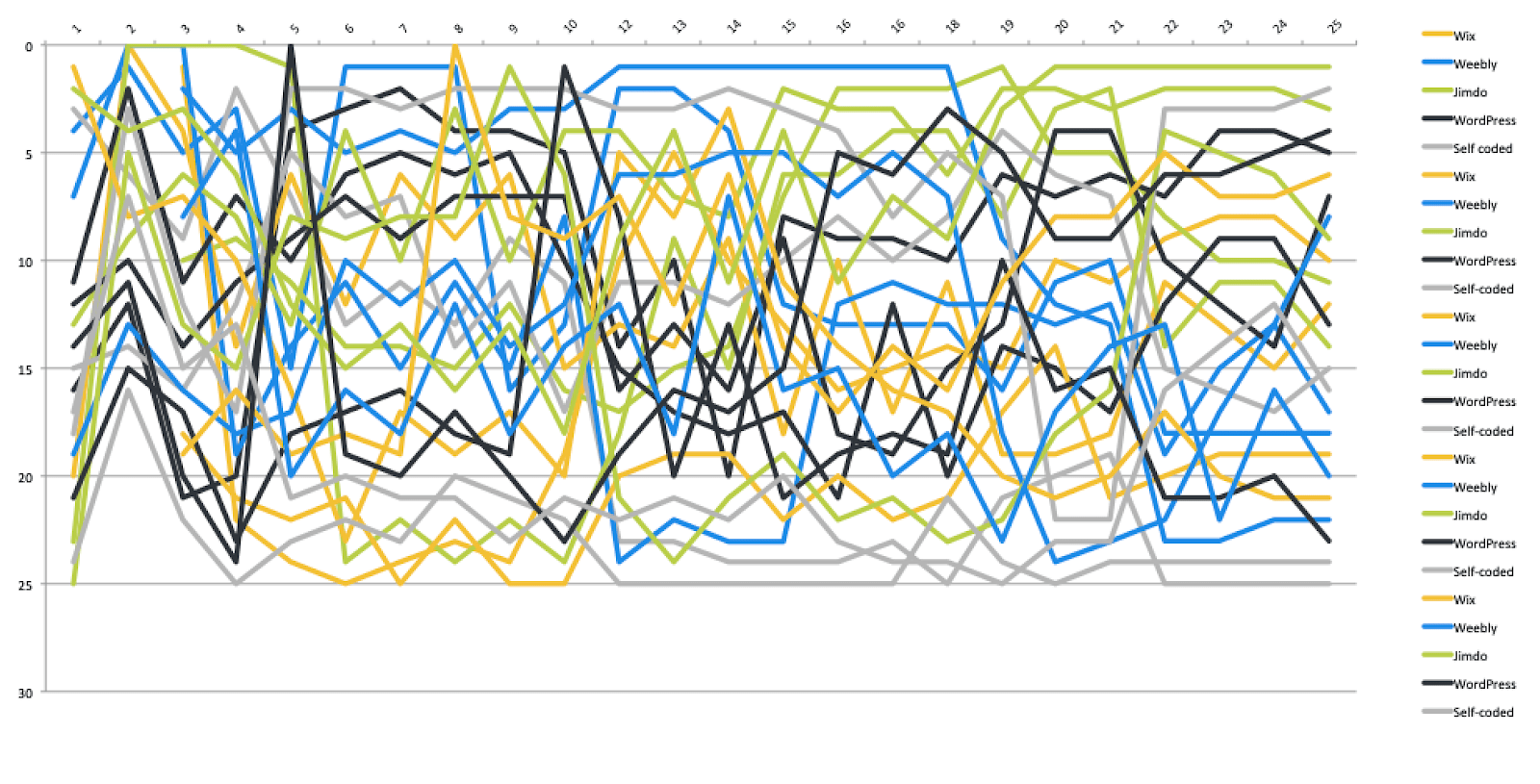

The following graph is a representation of the 25 sites over the experiment period. There’s actually a lot of chaos in the SERP, indicating a high level of volatility.

Figure 2

It’s shocking to see how the average position for Weebly (blue lines) sank after the 17th week (29 Oct 2018). There was no Google algorithm update around those dates according to Moz, so I am not sure if Weebly changed something internally. This seemed to affect all the Weebly sites.

Conclusion

First of all, we can see that all the pages were eventually indexed. So we can accept the first hypothesis: ‘Website builder sites will get indexed by Google’.

Of course, site builder pages (like any other site) need to obey Google’s guidelines in order to be indexed – and they did. For example, not having enough content or having duplicate content would stop pages from being indexed.

The second hypothesis – whether WordPress or self-coded sites would generally rank higher – is a bit trickier. However, the results don’t show anything that should make us believe that Google prefers one website building approach over another.

Let’s explore this in more detail:

We can see an extreme level of volatility in the SERPs (Figure 2), and this indicates that the rankings were not decided based on what platform was used to create each page – even though that was the only major difference between the pages.

Some platforms like Jimdo seemed to have better rankings on average (Figure 1). But if we look at the Top 10 results (Figure 2), there is always a mix of platforms ranking for those top spots.

So we can’t accept hypothesis 2 and it doesn’t seem like having a site built with one of the tested approaches gave any advantage.

Of course, these were oversimplified pages and some aspects were out of my control. But it would appear that Google doesn’t mind too much what platform is used to create content.

The infographic

Check out this infographic summarizing the study results.

List of Webpages

uzhscwzuiubzq.club

gvrprlfozcwcq.club

ohuiezcmpoied.club

kojnrncqoqczw.club

wpeehxctpzfdw.club

rxmkxvgadtwepksb.club

kfjwqgxlvtlwofwx.club

hnjebestfpkqbcyf.club

uocezaohpvvnouno.club

vmzquzxwfvtvqflo.club

zwwvehvuvyjssrtqebq.club

umwrvovbjdrdrwrmtwh.club

bzphgcsbvsnowwxbjft.club

ckmpxzuvzivuacbvlzp.club

podnnhbzdzuwcvvdrae.club

vhkknykutvw.club

qlsbyznvkmi.club

cdbwubojcjy.club

wxzzrnshlln.club

kqtnaflwoip.club

mqartjztcymiligrqyem.club

zdaegrtroxzwojfalehx.club

exwouabdxfptopshqhcp.club

klbrjmxsxcbrejvxmfyu.club

idgngcwqpltubkofzavt.club
